Smart Feedback Loop

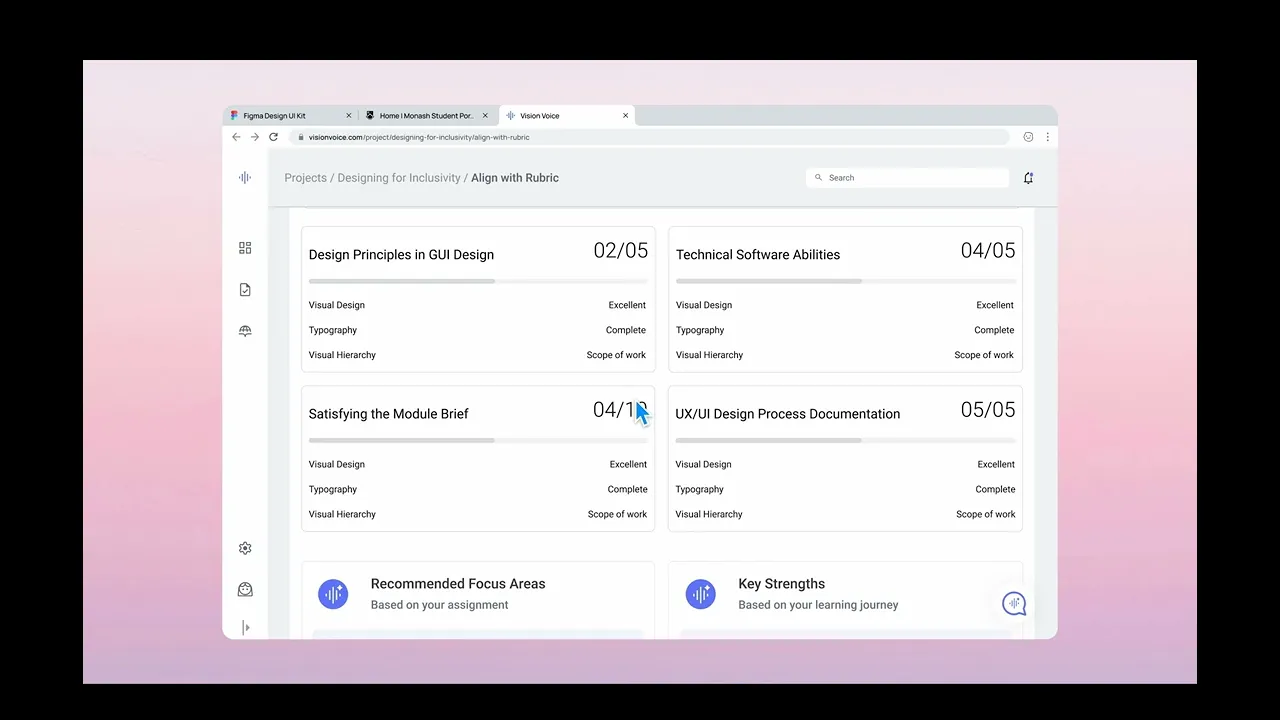

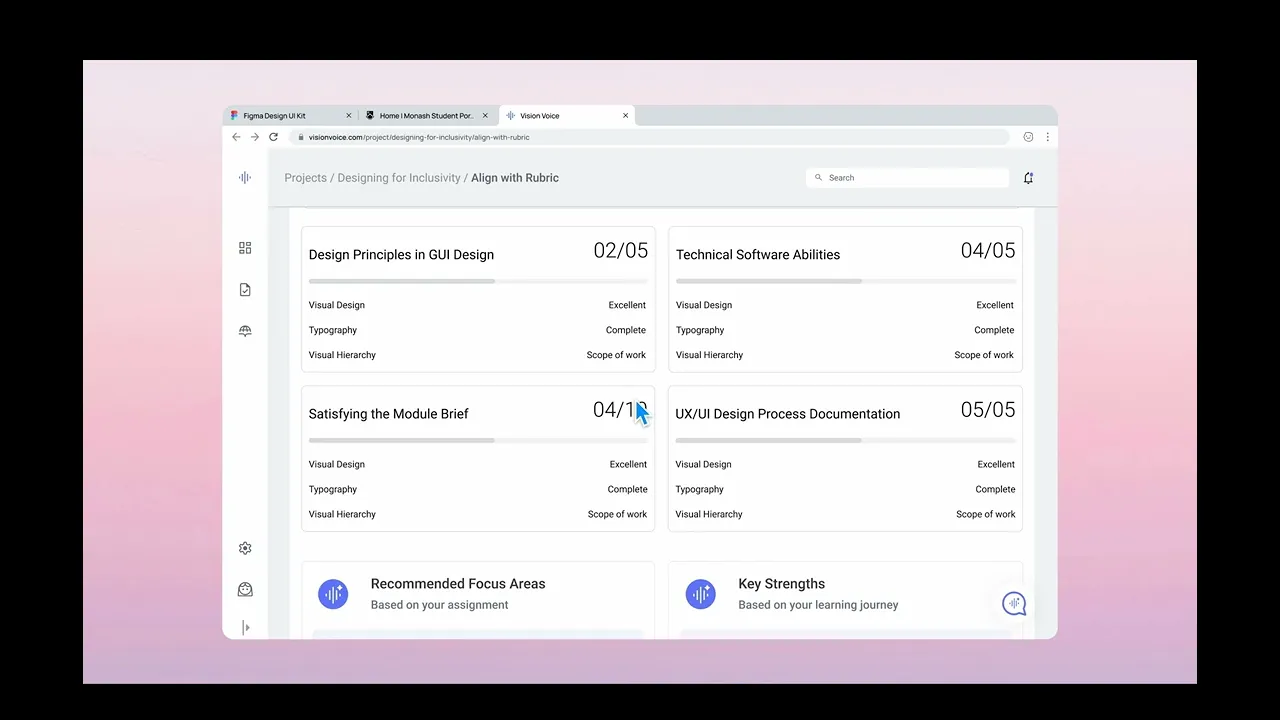

An AI-powered learning platform that delivers real-time, rubric-based critique to design students, closing the 48–72 hour gap between submission and meaningful feedback.

Title

Smart Feedback Loop

Industry

Ed-tech

Date

2025

Overview:

The goal of this project was to design an AI-powered learning platform that provides real-time, rubric-based feedback to design students, reducing the typical 48–72 hour delay in receiving meaningful critique. The platform focuses on continuous learning: it helps students understand, apply, and improve on structured feedback rather than wait for manual reviews.

Project Duration:

12 weeks (Capstone Project)

Role:

UI/UX Designer (Team Project)

Led end-to-end UX process (research → design → validation)

Contributed to AI-assisted feature design and user flows

Designed wireframes, prototypes, and high-fidelity interfaces

Tools Used:

Figma (UI/UX Design & Prototyping)

Miro (User flows, journey mapping)

Pen & Paper (Ideation)

Process:

1. Research & Discovery:

Conducted user interviews:

10 design students

2 design mentors

Key Insights:

Delayed feedback slows learning and iteration cycles

Students struggle to interpret and apply qualitative feedback

Lack of structured evaluation criteria (rubrics) creates ambiguity

Defined problem statement:

→ “How might we enable immediate, actionable feedback that supports continuous learning?”

2. Wireframing & Prototyping:

Designed core flows:

Design submission

AI-assisted feedback generation

Feedback interpretation and learning loop

Developed low- to high-fidelity wireframes:

Focus on clarity of feedback

Easy navigation between submission, critique, and iteration

Built interactive prototypes to simulate:

Real-time feedback experience

Iteration cycle based on insights

3. Visual Design:

Created a clean, structured interface prioritising:

Readability of feedback

Clear separation of evaluation criteria (rubric-based)

Minimal cognitive load

Designed UI to support:

Layered feedback (AI insights + mentor alignment)

Guided improvement flow

4. Usability Testing:

Tested prototypes with students (5–7 users)

Key Observations:

Users valued immediate feedback over delayed mentor reviews

Structured rubric feedback improved clarity and actionability

Some users needed guidance on interpreting AI suggestions

Iterations Made:

Simplified feedback language and visual grouping

Improved onboarding to explain AI-assisted feedback

Enhanced navigation between feedback and iteration

5. Implementation & Launch:

Delivered high-fidelity designs and interaction flows

Defined how AI integrates as a feedback layer (not a replacement for creativity)

Validated concept through prototype testing

(Note: Full development not in scope)

Results:

(Prototype-based validation)

Reduced perceived feedback wait time from days → immediate (conceptual impact)

~75% of users found the feedback more actionable than with traditional methods

Improved confidence in iteration and self-learning

Key Takeaways:

The speed of feedback directly impacts learning efficiency

Structured, rubric-based critique improves clarity and usability

AI is most effective as an assistive layer, not a replacement for human creativity

Clear guidance is essential when introducing AI-driven features

Next Steps:

Integrate mentor feedback alongside AI insights (hybrid model)

Improve AI explainability for deeper learning

Test with larger user groups for validation

Explore further integration with design tools

Challenge

Design students often experience delays of 48–72 hours in receiving feedback, slowing their learning and iteration process.

Additionally, feedback is often unstructured and difficult to interpret, making it challenging for students to apply insights effectively.

Approach

Designed an AI-assisted platform to deliver real-time, rubric-based feedback while supporting the learning process.

Introduced structured, rubric-driven evaluation for clarity

Designed AI as a support layer to interpret and reinforce mentor feedback

Created a clear feedback visualisation to improve understanding

Enabled iterative learning through quick feedback loops

Focused on maintaining human creativity while enhancing guidance

The platform transforms delayed feedback into an immediate and actionable learning experience.

By combining structured evaluation with AI-assisted insights, it improves clarity, accelerates iteration, and supports continuous skill development.
